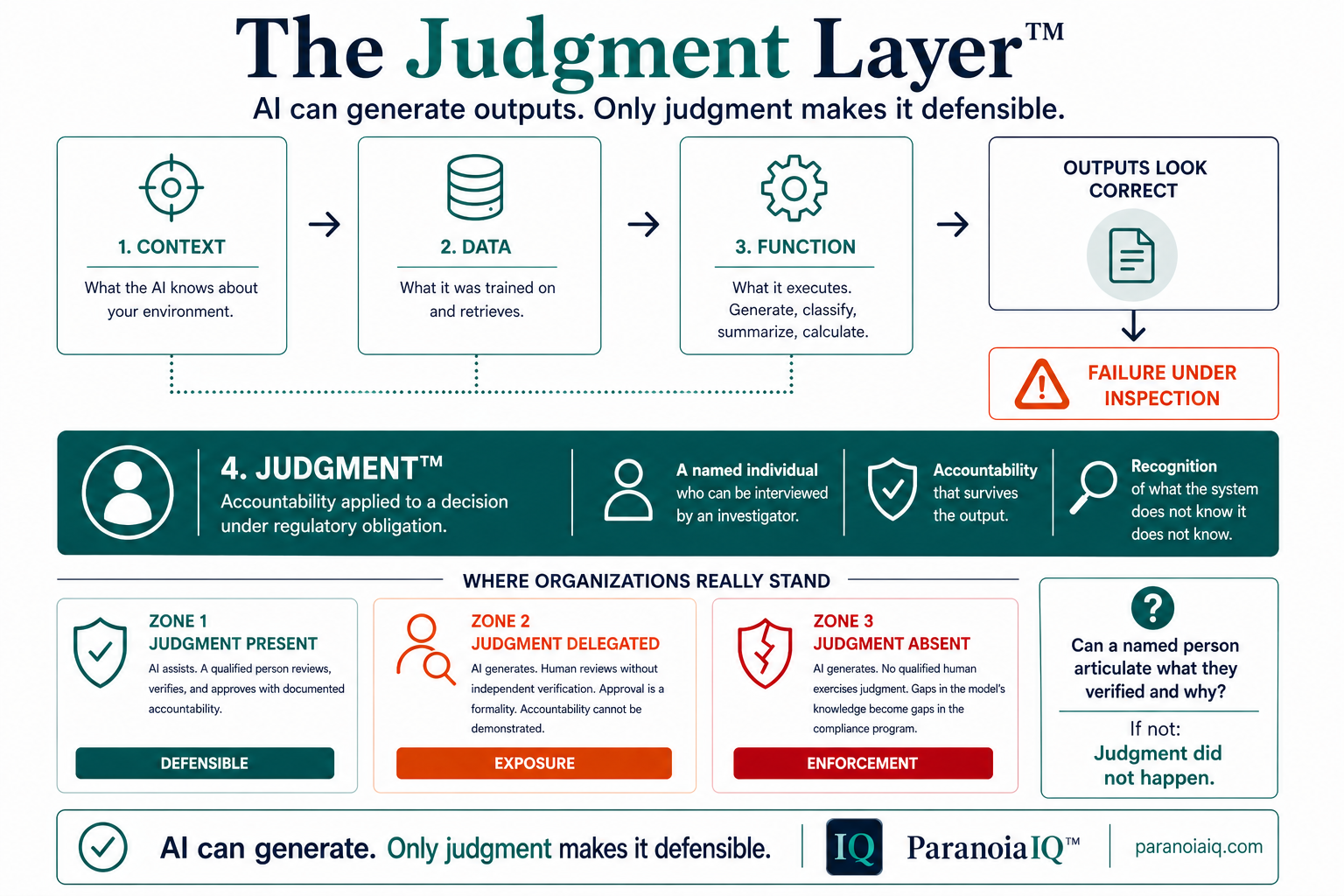

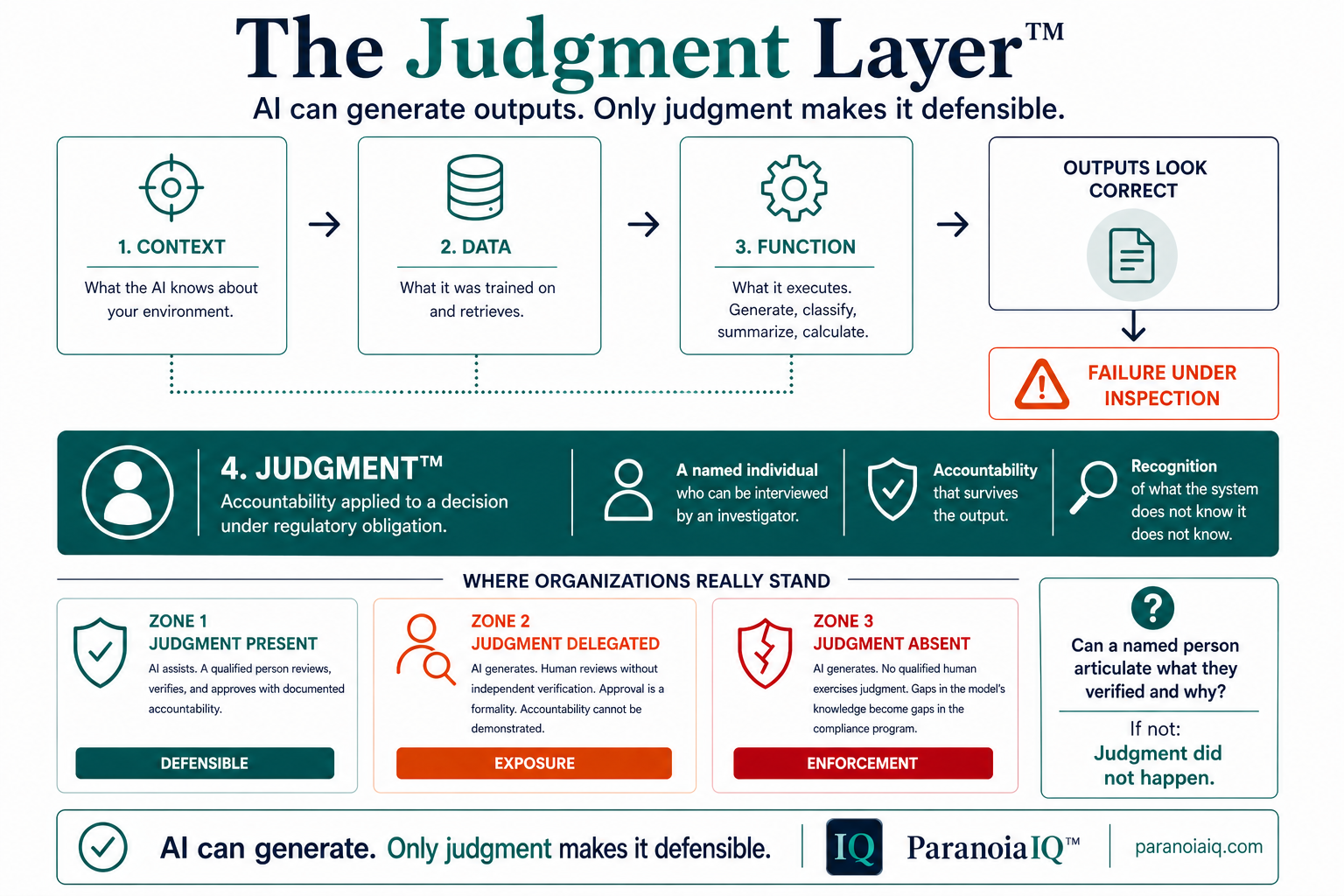

Context, data, and function produce outputs that look correct. Judgment is what makes those outputs defensible. It is the fourth layer of AI in regulated environments — and the only one FDA will enforce against when it is missing.

Everyone is talking about the three layers of AI: context, data, and function. FDA just told you they are not enough. Purolea Cosmetics Lab had all three. The warning letter came anyway.

Judgment is accountability applied to a decision under regulatory obligation.

The difference is not intention. It is whether a named person can articulate what they verified and why. If they cannot articulate it, judgment did not happen.
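
One way to make that test concrete is a release gate that refuses to clear an AI output unless a named, authorized person records what they verified and why. The sketch below is a minimal illustration under stated assumptions, not a validated system or anything FDA prescribes: `ReviewRecord`, `ClearanceError`, `clear_output`, and the `authorized_reviewers` allow-list are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


class ClearanceError(Exception):
    """Raised when an AI output cannot be cleared for use."""


@dataclass(frozen=True)
class ReviewRecord:
    """Hypothetical record of one human clearance decision."""
    ai_output: str
    reviewer: str        # the named person accountable for the decision
    verified: str        # what the reviewer actually checked
    rationale: str       # why the reviewer considers the output acceptable
    cleared_at: datetime


def clear_output(ai_output, reviewer, authorized_reviewers, verified, rationale):
    """Return a ReviewRecord only if a named, authorized reviewer
    has stated both what they verified and why."""
    # Assumption: the firm maintains a list of people authorized to clear outputs.
    if reviewer not in authorized_reviewers:
        raise ClearanceError(f"{reviewer!r} is not an authorized reviewer")
    # If the reviewer cannot articulate it, treat judgment as not having happened.
    if not verified.strip() or not rationale.strip():
        raise ClearanceError("reviewer must state what was verified and why")
    return ReviewRecord(
        ai_output=ai_output,
        reviewer=reviewer,
        verified=verified.strip(),
        rationale=rationale.strip(),
        cleared_at=datetime.now(timezone.utc),
    )
```

A non-empty text field proves only that something was written down, not that the review was sound. The gate makes a missing articulation visible; it does not create judgment.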

The organization never defined what judgment looks like, who exercises it, or how it gets documented. In that vacuum, behavior fills the gap. Sometimes well. Usually not. A structural judgment framework requires three things.

"Any output or recommendations from an AI agent must be reviewed and cleared by an authorized human representative of your firm's QU in accordance with section 501(a)(2)(B) of the FD&C Act."

AI can generate.

Only judgment makes it defensible.

You can validate context, data, and function. You cannot validate judgment into existence. The fourth layer is not a technology problem. It is a governance problem. And it is the layer FDA just enforced against.